AWS Clean Rooms: Secure Data Collaboration Without Sharing Raw Data

Kajanan Suganthan

1. Introduction to AWS Clean Rooms

In today's data-driven world, businesses increasingly rely on data collaboration to generate insights, improve decision-making, and drive innovation. However, sharing data across organizations often comes with significant privacy, security, and compliance risks. AWS Clean Rooms addresses these challenges by providing a secure, managed service that allows multiple organizations to collaborate on datasets without exposing sensitive or proprietary information. This ensures that businesses can unlock valuable insights while maintaining robust data privacy controls.

2. Key Features of AWS Clean Rooms

2.1 Secure Data Collaboration

AWS Clean Rooms enables multiple parties to analyze their combined data without any participant sharing raw data with the others. This is achieved through query controls that restrict what each query can return and, optionally, through privacy-preserving cryptographic computing, so insights can be extracted without exposing the underlying datasets.

Privacy-preserving computations: With cryptographic computing enabled, sensitive data stays encrypted during the computation, and query controls limit output to permitted results such as aggregates.

No raw data exchange: Organizations can work together on common datasets without needing to transfer, duplicate, or reveal sensitive data.

Granular collaboration controls: Each participant retains control over their data and can enforce security measures based on their own compliance policies.

2.2 Customizable Query Controls

To maintain strict governance over shared data, AWS Clean Rooms allows data owners to configure fine-grained access controls and query policies. These controls help restrict how data can be queried and what information is returned.

Policy-based query restrictions: Data owners can define specific rules to control which queries are allowed and what kind of results can be generated.

Selective data visibility: Organizations can limit access to only relevant data attributes, ensuring that sensitive fields remain protected.

Data masking and aggregation: AWS Clean Rooms can enforce privacy techniques such as minimum aggregation thresholds (a k-anonymity-style safeguard) and differential privacy to reduce the risk of data re-identification.
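To make the aggregation idea concrete, here is a minimal Python sketch of a k-anonymity-style threshold: groups with fewer distinct users than a minimum are dropped from the output. It illustrates the general technique only and is not the service's implementation; the function name, field names, and sample rows are all hypothetical.

```python
from collections import defaultdict


def aggregate_with_threshold(rows, group_key, user_key, min_distinct_users):
    """Count distinct users per group and suppress groups below the threshold."""
    users_per_group = defaultdict(set)
    for row in rows:
        users_per_group[row[group_key]].add(row[user_key])
    # Only groups with enough distinct users are released.
    return {
        group: len(users)
        for group, users in users_per_group.items()
        if len(users) >= min_distinct_users
    }


# Hypothetical sample data: three users in "sports", one in "travel".
rows = [
    {"segment": "sports", "user_id": "u1"},
    {"segment": "sports", "user_id": "u2"},
    {"segment": "sports", "user_id": "u3"},
    {"segment": "travel", "user_id": "u4"},
]

result = aggregate_with_threshold(rows, "segment", "user_id", min_distinct_users=3)
print(result)  # {'sports': 3}
```

The "travel" group is suppressed because a count of one could point to a single individual.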

2.3 Integration with AWS Analytics Services

AWS Clean Rooms is designed to work seamlessly with other AWS data services, enabling a streamlined workflow for collaborative data analysis.

AWS Glue and AWS Lake Formation: These services facilitate data cataloging, governance, and transformation, making it easier to prepare datasets for use in AWS Clean Rooms.

Amazon S3: As a core data storage service, S3 enables organizations to securely store and manage the data used in AWS Clean Rooms.

SQL-based queries: Users can run SQL queries to analyze combined datasets in a familiar and efficient manner without compromising privacy.

Integration with AWS Identity and Access Management (IAM): IAM policies and roles allow organizations to enforce strict access control and permissions.

2.4 Compliance and Security

Security and compliance are critical when dealing with sensitive data, and AWS Clean Rooms is built with industry-leading security measures to meet stringent regulatory requirements.

End-to-end encryption: Data remains encrypted throughout its lifecycle, both at rest and in transit.

Regulatory compliance: AWS Clean Rooms can support compliance programs built around major regulations and regulatory bodies, including:

General Data Protection Regulation (GDPR)

Health Insurance Portability and Accountability Act (HIPAA)

California Consumer Privacy Act (CCPA)

Financial Industry Regulatory Authority (FINRA)

Auditing and monitoring: AWS Clean Rooms supports detailed logging via AWS CloudTrail, allowing organizations to track data access and query history for compliance purposes.

2.5 No Data Movement Required

A major advantage of AWS Clean Rooms is that it eliminates the need to move data between parties, reducing exposure risks and ensuring data sovereignty.

Data remains within its original environment: Organizations retain full ownership and control over their datasets.

Minimized security risks: By preventing unnecessary data transfers and duplications, AWS Clean Rooms mitigates the risks of data leaks or unauthorized access.

Scalability and efficiency: Businesses can quickly onboard partners and run secure analyses without complex data-sharing agreements or infrastructure overhead.

3. Use Cases for AWS Clean Rooms

AWS Clean Rooms is particularly beneficial in industries that require secure data collaboration, such as finance, healthcare, and advertising. Common use cases include:

3.1 Advertising and Marketing Analytics

Retailers and advertisers can analyze customer behavior trends without sharing raw customer data.

Helps measure the effectiveness of marketing campaigns while preserving consumer privacy.

Enables cross-company data collaboration for better targeted advertising and audience insights.

Ensures compliance with data protection regulations while maximizing analytical capabilities.

3.2 Healthcare Research Collaboration

AWS Clean Rooms allows healthcare institutions to securely collaborate on research data while ensuring patient confidentiality.

Supports secure cross-institutional clinical studies.

Facilitates the analysis of anonymized patient datasets to improve healthcare outcomes.

Ensures compliance with healthcare regulations like HIPAA and GDPR while enabling research innovation.

3.3 Financial Risk Assessment

Banks and financial institutions can collaborate on fraud detection models and risk assessments without exposing customer details.

Helps assess credit risks securely across multiple entities.

Enables financial institutions to share aggregated fraud detection insights while protecting individual customer data.

Supports regulatory compliance while enhancing industry-wide security measures.

3.4 Supply Chain Optimization

Manufacturers and suppliers can share supply chain data securely, avoiding the disclosure of proprietary information.

Enhances forecasting and inventory planning through secure data analysis.

Improves logistics coordination while maintaining data privacy.

Reduces supply chain risks by enabling better data-driven decision-making across stakeholders.

4. Pricing of AWS Clean Rooms

AWS Clean Rooms follows a pay-as-you-go pricing model, meaning you are only billed for the resources you actually use. There are no upfront costs or long-term commitments, making it a flexible option for organizations that need secure data collaboration.

4.1 Query Processing Charges

AWS Clean Rooms charges for the queries executed within a clean room. Pricing is based on the Clean Rooms Processing Unit (CRPU), which represents the computational capacity required to process queries.

Factors Affecting Query Costs

Query Complexity: Simple SELECT queries cost less than complex JOIN or AGGREGATE operations.

Number of Rows Processed: The more rows scanned, the higher the cost.

Data Filtering Efficiency: Filtering unnecessary data early reduces scanning costs.

Example Pricing Calculation

Scenario: A marketing agency runs 15 queries per day to analyze customer engagement data.

Each query processes 5 million rows.

AWS charges $X per 1 million rows scanned.

✅ Cost Breakdown:

Daily Query Cost: (15 × 5M) ÷ 1M × $X = $D per day

Monthly Cost: $D × 30 = $E per month
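The arithmetic in this scenario can be sketched in a few lines of Python. The rate used below is a made-up placeholder standing in for $X, not an actual AWS price, and the function name is hypothetical.

```python
def query_cost(queries_per_day, rows_per_query, rate_per_million_rows, days=30):
    """Return (daily_cost, monthly_cost) for a simple per-million-rows model."""
    daily_rows = queries_per_day * rows_per_query
    daily_cost = daily_rows / 1_000_000 * rate_per_million_rows
    return daily_cost, daily_cost * days


# Placeholder rate for $X; substitute the real figure from the AWS pricing page.
PLACEHOLDER_RATE = 0.01

daily, monthly = query_cost(
    queries_per_day=15,
    rows_per_query=5_000_000,
    rate_per_million_rows=PLACEHOLDER_RATE,
)
print(f"Daily: ${daily:.2f}, monthly: ${monthly:.2f}")  # Daily: $0.75, monthly: $22.50
```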

4.2 Data Scanning & Computational Usage

AWS Clean Rooms charges for data scanned during query execution. The amount of data scanned is influenced by:

Data format: Compressed and columnar formats (like Parquet) reduce scan size.

Data filtering: Queries using WHERE clauses reduce unnecessary scanning.

Partitioning: Partitioned datasets limit the amount of scanned data.

Example: Data Scanning Cost Estimation

Scenario: A financial services company collaborates with a partner, running 25 queries per day, each scanning 10 million rows.

AWS charges $Y per 1 million rows scanned.

✅ Cost Breakdown:

Daily Data Scanning Cost: (25 × 10M) ÷ 1M × $Y = $F per day

Monthly Cost: $F × 30 = $G per month

Cost Optimization Tip: Use partitioned storage to scan only relevant rows, reducing scanning costs.
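The effect of partitioning on scan volume can be estimated with a short sketch. It assumes a hypothetical table of 10 million rows split into monthly partitions; the partition keys and row counts are invented for illustration.

```python
def rows_scanned(partition_row_counts, wanted_partitions=None):
    """Sum the rows in the partitions a query must read.

    With no partition filter, every partition is scanned.
    """
    if wanted_partitions is None:
        return sum(partition_row_counts.values())
    return sum(
        count
        for key, count in partition_row_counts.items()
        if key in wanted_partitions
    )


# Hypothetical monthly partitions totalling 10 million rows.
partitions = {"2025-01": 4_000_000, "2025-02": 3_000_000, "2025-03": 3_000_000}

full_scan = rows_scanned(partitions)
pruned_scan = rows_scanned(partitions, {"2025-03"})
savings = 1 - pruned_scan / full_scan

print(full_scan, pruned_scan)   # 10000000 3000000
print(f"{savings:.0%} fewer rows scanned")  # 70% fewer rows scanned
```

Under a per-row pricing model, a query that filters on the partition key pays only for the partitions it touches.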

4.3 Storage Costs

AWS Clean Rooms itself does not store data permanently. However, the cost of storing and managing datasets in Amazon S3 or related AWS services is an important consideration.

Typical Storage Costs

Amazon S3 Standard Storage: $0.023 per GB per month

Amazon S3 Intelligent-Tiering: $0.0125 per GB per month for infrequent access

Amazon S3 Glacier: $0.004 per GB per month for archival storage

Example: Storage Cost Calculation

Scenario: A company stores 500GB of data in Amazon S3 for use in AWS Clean Rooms.

Monthly Storage Cost (S3 Standard): 500GB × $0.023 = $11.50 per month

Monthly Cost (Intelligent-Tiering): 500GB × $0.0125 = $6.25 per month

Cost Optimization Tip: Move cold data to Amazon S3 Glacier to save costs.
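The storage figures above can be reproduced with a small helper. The per-GB prices are the ones quoted in this section and may differ by region or change over time; the dictionary keys and function name are hypothetical.

```python
# Per-GB monthly prices as quoted in this section (verify against current AWS pricing).
PRICE_PER_GB_MONTH = {
    "standard": 0.023,
    "intelligent_tiering_infrequent": 0.0125,
    "glacier": 0.004,
}


def monthly_storage_cost(size_gb, storage_class):
    """Return the monthly storage cost in dollars, rounded to cents."""
    return round(size_gb * PRICE_PER_GB_MONTH[storage_class], 2)


standard_cost = monthly_storage_cost(500, "standard")
tiering_cost = monthly_storage_cost(500, "intelligent_tiering_infrequent")
glacier_cost = monthly_storage_cost(500, "glacier")

print(f"${standard_cost:.2f}")  # $11.50
print(f"${tiering_cost:.2f}")   # $6.25
print(f"${glacier_cost:.2f}")   # $2.00
```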

4.4 Integration Costs with Other AWS Services

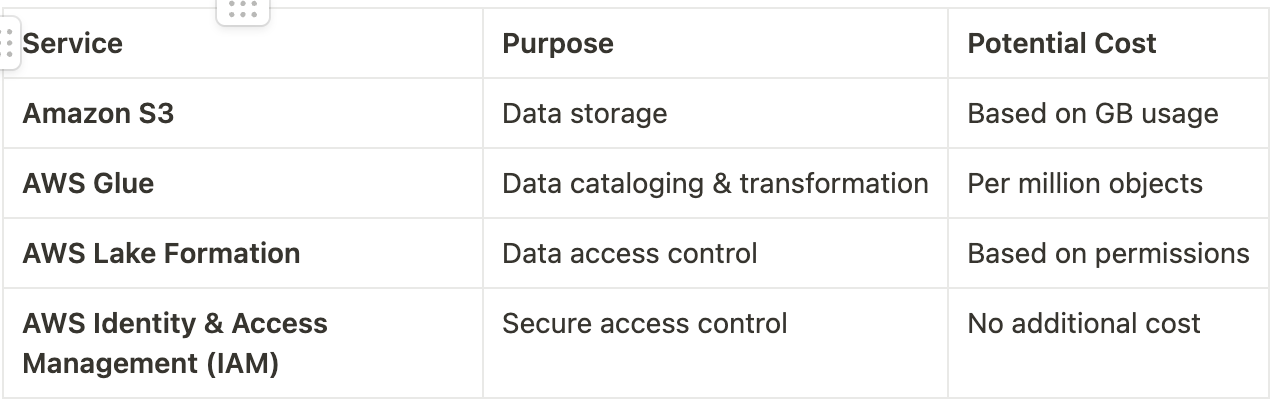

AWS Clean Rooms integrates with multiple AWS services, each with its own pricing model. You may incur additional charges based on how these services are used:

4.5 Example Pricing Scenarios

Example 1: Small-Scale Data Collaboration

A retail company runs a basic analytics collaboration with a supplier, executing 10 simple queries daily, each processing 3 million rows.

Estimated Cost Breakdown:

Query Processing Cost: (10 × 3M) ÷ 1M × $X = $A per day

Monthly Cost: $A × 30 = $B per month

Total Monthly Cost Estimate: $B

Example 2: Large-Scale Partner Analysis

A multinational company collaborates with multiple partners, running 50 complex queries per day, each scanning 50 million rows.

Estimated Cost Breakdown:

Query Processing Cost: (50 × 50M) ÷ 1M × $X = $C per day

Storage Cost (S3 Standard, 1TB): 1TB (1,000GB) × $0.023 per GB = $23 per month

Additional AWS Glue Usage Cost: $10 per million objects (one million objects assumed for this example, so $10 per month)

Total Monthly Cost Estimate: ($C × 30) + $23 + $10 = $T

4.6 How to Reduce AWS Clean Rooms Costs?

✅ 1. Optimize Queries to Reduce Scanned Data

Use filters (WHERE clauses) to limit data retrieval.

Use aggregations (SUM, COUNT, GROUP BY) to reduce computation.

✅ 2. Store Data in Efficient Formats

Use Parquet or ORC instead of CSV to reduce data scan size.

Partition data logically to avoid scanning unnecessary records.

✅ 3. Monitor Costs and Set Budgets

Track Clean Rooms costs via AWS Cost Explorer.

Set alerts in AWS Budgets for when query execution costs exceed budget limits.

✅ 4. Use Amazon S3 Intelligent-Tiering for Cost Savings

Move infrequently accessed data to Intelligent-Tiering or S3 Glacier.

5. 2025 Updates and Enhancements

AWS Clean Rooms continues to evolve, introducing new features and improvements in 2025 to enhance query capabilities, security, and integration with other AWS services. These updates provide greater flexibility, improved data protection, and expanded interoperability, making AWS Clean Rooms an even more powerful tool for secure data collaboration.

5.1 Expanded Query Capabilities

AWS Clean Rooms now supports more advanced analytics functions, enabling users to perform complex data operations directly within their clean rooms.

New Query Enhancements

Advanced Aggregations: Perform more complex SUM, COUNT, AVG, MEDIAN, and percentile-based calculations for deeper insights.

Window Functions: Use functions like RANK(), LEAD(), LAG(), and ROW_NUMBER() for advanced data analytics.

Pattern Matching with SQL LIKE & REGEX: Enables sophisticated filtering and text analysis within datasets.

Support for JSON & Semi-Structured Data: Improved handling of nested and structured data formats, making it easier to work with diverse data sources.

Example Use Case

A marketing firm collaborating with a retail company can now run advanced time-series analysis to track customer purchasing trends using window functions, helping them optimize ad targeting strategies.
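As an illustration of what a window function such as LAG() computes in a purchasing-trend analysis, the sketch below runs one locally. It uses Python's built-in sqlite3 module purely as a stand-in SQL engine (window functions need SQLite 3.25 or later), not AWS Clean Rooms itself, and the table, columns, and data are hypothetical. In a real collaboration, the query controls described earlier would typically require the final output to be aggregated rather than row-level.

```python
import sqlite3

# In-memory database used only to demonstrate window-function semantics.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE purchases (customer_id TEXT, month TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO purchases VALUES (?, ?, ?)",
    [
        ("c1", "2025-01", 100.0),
        ("c1", "2025-02", 150.0),
        ("c1", "2025-03", 120.0),
        ("c2", "2025-01", 80.0),
        ("c2", "2025-02", 80.0),
    ],
)

# LAG() looks at the previous row within each customer's ordered history,
# so the difference is the month-over-month change in spend.
query = """
SELECT customer_id,
       month,
       amount,
       amount - LAG(amount) OVER (
           PARTITION BY customer_id ORDER BY month
       ) AS month_over_month_change
FROM purchases
ORDER BY customer_id, month
"""
trend = conn.execute(query).fetchall()

for row in trend:
    print(row)
```

The first month for each customer has no previous row, so its change is NULL (None in Python).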

5.2 Enhanced Security Controls

Security has been further strengthened in AWS Clean Rooms to ensure that data remains private, encrypted, and tightly controlled.

Key Security Enhancements

Improved Encryption Mechanisms:

Data is now encrypted both in transit and at rest with AES-256 encryption.

Support for customer managed keys via AWS Key Management Service (KMS).

Stronger Access Control Policies:

Granular permissions allow administrators to restrict access at the query level, ensuring that collaborators can only access specific datasets.

Integration with AWS IAM ensures role-based access control (RBAC) for secure data sharing.

Enhanced Data Masking & Anonymization:

On-the-fly data masking lets organizations share insights without exposing sensitive information.

Tokenization options now allow hashed identifiers instead of raw data exposure.

Example Use Case

A financial institution collaborating with an advertising partner can now use AWS Clean Rooms with data masking to analyze customer behavior trends without exposing sensitive financial data.
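The idea of replacing raw identifiers with hashed tokens can be sketched with a keyed hash (HMAC-SHA256) from the Python standard library. This is a generic technique shown for illustration, not the mechanism AWS Clean Rooms uses internally; the key, function name, and sample identifiers are hypothetical, and the sketch assumes both parties have agreed on the key out of band.

```python
import hashlib
import hmac


def tokenize(identifier: str, secret_key: bytes) -> str:
    """Return a keyed hash of a normalized identifier.

    Normalizing first (trim and lowercase) ensures both parties derive the
    same token from the same underlying value.
    """
    normalized = identifier.strip().lower()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical shared key; in practice this would come from a secrets manager.
SHARED_KEY = b"example-key-agreed-out-of-band"

token_a = tokenize(" Alice@Example.com ", SHARED_KEY)
token_b = tokenize("alice@example.com", SHARED_KEY)
token_c = tokenize("bob@example.com", SHARED_KEY)

print(token_a == token_b)  # True
print(token_a == token_c)  # False
```

A keyed hash is used instead of a plain hash so that a party without the key cannot rebuild tokens by hashing guessed identifiers.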

5.3 Integration with More AWS Services

AWS Clean Rooms now provides deeper integration with major AWS services, making it easier to combine data sources and streamline workflows.

Key AWS Service Integrations in 2025

Amazon Redshift Integration:

Users can now query data stored in Amazon Redshift directly within AWS Clean Rooms, without needing to move or duplicate datasets.

Optimized query performance using Amazon Redshift Spectrum for distributed data processing.

AWS Lake Formation Support:

AWS Clean Rooms now works natively with AWS Lake Formation, allowing organizations to securely catalog and share datasets with partners.

Automated fine-grained access control ensures only authorized queries can retrieve sensitive data.

Amazon Athena Compatibility:

AWS Clean Rooms now allows federated queries with Amazon Athena, making it easier to analyze data across S3, relational databases, and third-party sources.

Example Use Case

A healthcare research company working with pharmaceutical partners can now securely query patient records stored in Amazon Redshift, using AWS Clean Rooms without duplicating sensitive data, ensuring compliance with HIPAA and GDPR regulations.

5.4 Performance Improvements & Cost Optimization

AWS Clean Rooms has also introduced performance optimizations to improve query speed and reduce overall costs.

Optimized Query Execution Engine:

Faster query processing times reduce execution delays for large-scale analytics.

Intelligent query caching minimizes redundant data scans, reducing costs.

Cost-Saving Enhancements:

Dynamic Pricing Tiers: AWS Clean Rooms now offers lower-cost tiers for batch processing workloads.

Smarter Data Partitioning: AWS automatically optimizes dataset partitions, ensuring only relevant data is scanned, lowering compute costs.

Example Use Case

In an illustrative scenario, a large-scale advertising company running a very high volume of queries each month could use intelligent query caching and partition pruning to lower its AWS Clean Rooms costs, for example by around 30%.

Final Thoughts on 2025 Enhancements

With these new updates, AWS Clean Rooms is more powerful than ever, offering:

Advanced analytics functions for deeper insights

Stronger security & encryption to protect data

Seamless integrations with Amazon Redshift, Athena & Lake Formation

Optimized performance & cost-saving mechanisms

These improvements make AWS Clean Rooms a leading solution for secure data collaboration, ensuring that businesses can analyze data together without exposing sensitive information.

6. How to Get Started with AWS Clean Rooms

AWS Clean Rooms provides a secure, collaborative environment for analyzing sensitive data without compromising privacy. To begin using AWS Clean Rooms, follow these steps to set up your environment, integrate data sources, and perform secure collaborative analytics.

6.1 Prerequisites

Before you get started, ensure you meet the following prerequisites:

AWS Account: You need an active AWS account. If you don't have one, you can create one from the AWS sign-up page.

IAM Permissions: Ensure that your AWS Identity and Access Management (IAM) roles have the necessary permissions. At a minimum, you will need permissions for AWS Clean Rooms, Amazon S3, AWS KMS (for encryption), and any other services you plan to use.

AWS CLI/SDK: Optional, but it’s recommended to have AWS CLI or an SDK (such as AWS SDK for Python) set up to interact with the service programmatically.

6.2 Step-by-Step Setup

Step 1: Create a Clean Room

Log in to the AWS Console:

Access the AWS Management Console and navigate to AWS Clean Rooms.

Initiate Clean Room Creation:

Click the button to create a new clean room (AWS Clean Rooms refers to a clean room as a collaboration).

Provide a name and description for your clean room to help identify its purpose.

Configure Data Sources:

Link your data sources to the Clean Room. You can integrate Amazon S3 buckets, Amazon Redshift, or other supported services.

Specify the datasets or tables that you want to include in the clean room. Make sure they are organized and properly labeled for collaborative access.

Define Access Permissions:

Set up permissions based on roles and policies using IAM. You can create granular access controls to limit what each participant can access or query.

Choose which team members (internal or external collaborators) will be granted access to this clean room.

Set Security and Compliance Options:

Choose your encryption settings, such as using AWS KMS with customer managed keys.

Enable data masking or tokenization if you need to share anonymized insights.

Review and Confirm:

Review all your configurations and click on Create to finalize the setup.
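For readers who prefer to script Step 1, the sketch below shows roughly how the same setup might look with the AWS SDK for Python (boto3). The parameter names reflect my understanding of the boto3 `cleanrooms` client's `create_collaboration` call and should be checked against the current SDK documentation before use. The collaboration name, display names, and account ID are placeholders, and the helper functions are hypothetical.

```python
def build_collaboration_request(name, description, creator_display_name,
                                partner_account_id, partner_display_name):
    """Assemble keyword arguments for creating a collaboration (clean room)."""
    return {
        "name": name,
        "description": description,
        "creatorDisplayName": creator_display_name,
        # Assumption: the creator runs queries and receives the results.
        "creatorMemberAbilities": ["CAN_QUERY", "CAN_RECEIVE_RESULTS"],
        "members": [
            {
                "accountId": partner_account_id,
                "displayName": partner_display_name,
                # Assumption: the partner only contributes data.
                "memberAbilities": [],
            }
        ],
        # Keep query logs so activity can be audited later.
        "queryLogStatus": "ENABLED",
    }


def create_collaboration(request):
    """Send the request to AWS. Requires boto3 and valid AWS credentials."""
    import boto3  # imported here so the builder above works without boto3

    client = boto3.client("cleanrooms")
    return client.create_collaboration(**request)


request = build_collaboration_request(
    name="retail-supplier-analytics",      # placeholder name
    description="Joint campaign analysis",
    creator_display_name="Retailer",
    partner_account_id="111122223333",     # placeholder account ID
    partner_display_name="Supplier",
)
print(request["name"], request["queryLogStatus"])
```

Separating the request builder from the API call makes it possible to review or unit-test the configuration before anything is created in an account.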

Step 2: Integrate Data

After creating your clean room, the next step is integrating your data sources for analysis.

Integrate Amazon S3:

You can connect your Amazon S3 buckets to the clean room. Make sure the data you want to use is already stored in the correct S3 location.

Set access policies for each dataset to ensure appropriate data sharing.

Link Amazon Redshift:

If you're using Amazon Redshift as a data source, configure a Redshift data connection within your Clean Room.

Enable Amazon Redshift Spectrum for distributed query capabilities if your data is stored in S3.

Use AWS Lake Formation:

If you're leveraging AWS Lake Formation, integrate it with AWS Clean Rooms to allow for secure data sharing and access using fine-grained permissions.

Set Data Collaboration Parameters:

When setting up the data sources, you will specify who can access what data. You can also enable data transformations to preprocess data before sharing.

Step 3: Query and Analyze Data

Once your clean room is set up and data sources are integrated, it’s time to perform analytics.

Run Queries:

You can run SQL-like queries directly on the data within the clean room using Amazon Athena or Redshift Spectrum for large datasets.

Leverage advanced analytics functions such as window functions, aggregations, and pattern matching for in-depth analysis.

Collaborate with Partners:

Share the query results with authorized collaborators. Ensure that only the right team members have access to specific insights based on your access control configurations.

Use Data Masking:

If you need to mask sensitive data, enable data masking or tokenization to ensure that only non-sensitive insights are shared.

Step 4: Manage and Monitor

Monitor Activities:

Use AWS CloudTrail to monitor all activities in your clean room. CloudTrail records every access event, providing detailed logging of who accessed what data and when.

Audit Data Sharing:

Regularly audit data sharing to ensure that sensitive information is not exposed to unauthorized parties. AWS Clean Rooms offers detailed logs and insights into which datasets were accessed and by whom.

Manage Costs:

Keep track of your usage and expenses by setting up AWS Budgets and monitoring your AWS Clean Rooms usage via the AWS Cost Explorer.

Optimize performance by using features like query caching to reduce unnecessary compute costs.
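The CloudTrail monitoring described in Step 4 could be scripted along the following lines. This is a sketch under assumptions: it uses the boto3 CloudTrail `lookup_events` paginator, and the event source value `cleanrooms.amazonaws.com` is my assumption about how Clean Rooms events are labeled, so confirm it against events in your own trail. The helper names and sample events are hypothetical.

```python
from collections import Counter


def summarize_events(events):
    """Count events per (user, event name) pair from CloudTrail event records."""
    return Counter(
        (event.get("Username", "unknown"), event["EventName"]) for event in events
    )


def fetch_clean_rooms_events(start_time, end_time):
    """Fetch recent events from CloudTrail. Requires boto3 and AWS credentials."""
    import boto3  # imported here so summarize_events works without boto3

    client = boto3.client("cloudtrail")
    paginator = client.get_paginator("lookup_events")
    events = []
    for page in paginator.paginate(
        LookupAttributes=[
            # Assumed event source name for AWS Clean Rooms.
            {"AttributeKey": "EventSource", "AttributeValue": "cleanrooms.amazonaws.com"}
        ],
        StartTime=start_time,
        EndTime=end_time,
    ):
        events.extend(page["Events"])
    return events


# Hypothetical records shaped like CloudTrail lookup results.
sample_events = [
    {"Username": "analyst-a", "EventName": "StartProtectedQuery"},
    {"Username": "analyst-a", "EventName": "StartProtectedQuery"},
    {"Username": "admin-b", "EventName": "CreateCollaboration"},
]

summary = summarize_events(sample_events)
print(summary[("analyst-a", "StartProtectedQuery")])  # 2
```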

6.3 Best Practices

Data Security: Always use encryption for sensitive data at rest and in transit. Enable fine-grained access control to restrict data sharing only to necessary collaborators.

Efficient Data Partitioning: Structure your data into partitions to optimize query performance and minimize costs.

Data Governance: Establish clear data governance policies to ensure that sensitive information is protected and only accessible by authorized parties.

Collaboration Guidelines: Clearly define roles and responsibilities in collaborative data analysis to avoid unauthorized access or data misuse.

7. Conclusion

AWS Clean Rooms is a powerful solution for businesses looking to collaborate on data insights securely. With its robust privacy-preserving features and seamless AWS integration, it enables industries such as healthcare, finance, and marketing to drive innovation while maintaining data security. As AWS continues to enhance its capabilities, AWS Clean Rooms remains a key tool for secure data collaboration.